Edge AI: The New Frontier

AI at your fingertips.

ARTIFICIAL INTELLIGENCE · INTERNET OF THINGS

Jeugene John V

3/12/2026 · 3 min read

The Edge AI Paradigm

Artificial Intelligence (AI) has moved beyond its status as a tech-industry buzzword to become a primary engine of modern business and consumer innovation. By delivering higher performance, greater operational efficiency, and predictive consumer analytics, AI has become an indispensable asset.

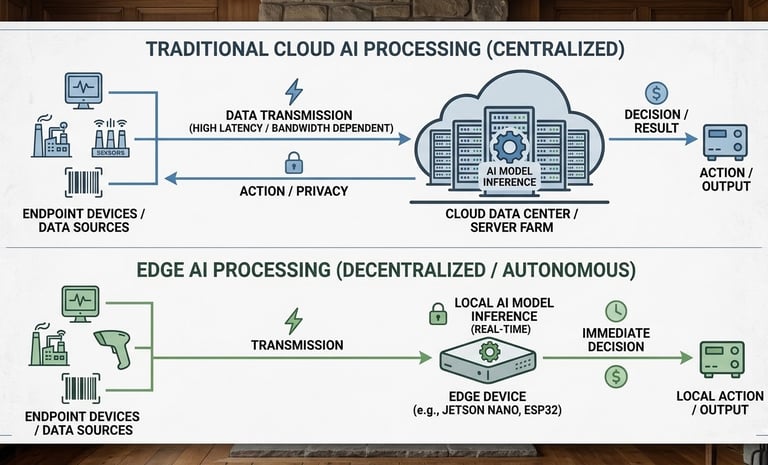

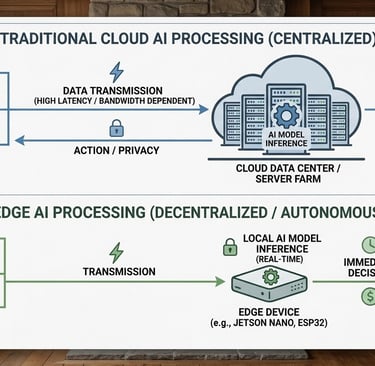

Historically, AI has relied on Centralized Intelligence: large-scale server farms (the "Cloud"). In this traditional model, data from endpoint devices such as medical monitors, industrial sensors, or retail scanners is transmitted to a distant server for processing, and the result is sent back. While functional, this round trip introduces significant bottlenecks in latency, security, and cost efficiency.

Edge AI represents a strategic shift in this architecture. By moving computation to the "Edge," directly on or near the source of the data, we remove the need for a constant cloud connection. This shift shortens response times, strengthens data privacy, and reduces recurring infrastructure costs. In the following sections, we will analyze why this decentralization is no longer just an option but a necessity for the next generation of smart systems.

Why Edge AI?

While centralized AI has served as a foundational step, the "Cloud-First" approach introduces three critical vulnerabilities that modern enterprises can no longer ignore:

Prohibitive Operational Overhead: Cloud-hosted AI requires substantial, recurring subscription fees to maintain its infrastructure. Because these fees scale with data volume, they keep climbing as deployments grow, creating a "Cloud Tax" that can erode the ROI of even the most efficient models.
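The cost argument can be made concrete with a toy model. This is a minimal sketch under loudly stated assumptions: cloud inference is billed at a flat per-request price, edge hardware is a one-time purchase plus a fixed yearly upkeep, and every number and function name below (`cloud_cost`, `edge_cost`, `break_even_year`) is hypothetical, not a quote from any provider.

```python
# Hypothetical cost model: cloud inference billed per request versus a one-time
# edge hardware purchase. Every price here is an illustrative placeholder.
from typing import Optional


def cloud_cost(requests_per_year: int, years: int, price_per_1k: float) -> float:
    """Cumulative cloud spend: proportional to request volume and time."""
    return requests_per_year / 1000 * price_per_1k * years


def edge_cost(devices: int, years: int, unit_price: float, upkeep_per_year: float) -> float:
    """Cumulative edge spend: up-front hardware plus fixed yearly upkeep per device."""
    return devices * (unit_price + upkeep_per_year * years)


def break_even_year(requests_per_year: int, price_per_1k: float, devices: int,
                    unit_price: float, upkeep_per_year: float,
                    max_years: int = 10) -> Optional[int]:
    """First year in which cumulative edge cost is at or below cloud cost, else None."""
    for year in range(1, max_years + 1):
        edge = edge_cost(devices, year, unit_price, upkeep_per_year)
        cloud = cloud_cost(requests_per_year, year, price_per_1k)
        if edge <= cloud:
            return year
    return None


# Example: 10 devices making 5 million requests per year in total.
print(break_even_year(5_000_000, 0.50, devices=10, unit_price=300.0, upkeep_per_year=20.0))  # 2
```

Under these made-up numbers the edge fleet costs more in year one but breaks even in year two. With a low request volume the function returns `None`, which is the case where the cloud remains the cheaper option.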

The Latency and Connectivity Trap: Mission-critical systems, such as medical diagnostics or industrial automation, cannot afford the round-trip delay to a distant server. Furthermore, dependence on a constant, high-bandwidth connection leaves the system vulnerable to network outages, technical failures, and environmental disasters. True intelligence must be autonomous to be reliable.
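The latency and connectivity trap can be illustrated with a simple latency budget. The sketch below assumes end-to-end delay is the sum of network round trip, queueing at a shared server, and model inference time; all millisecond figures and the `LatencyBudget`/`decide_latency` names are hypothetical illustrations, not measurements.

```python
# Hypothetical latency budget comparing cloud and edge inference.
# All millisecond figures are illustrative assumptions, not measurements.
from dataclasses import dataclass
from typing import Optional


@dataclass
class LatencyBudget:
    network_rtt_ms: float     # round trip to the server; 0 when inference is local
    inference_ms: float       # time to run the model on the chosen hardware
    queueing_ms: float = 0.0  # time spent waiting behind other clients' requests

    def total_ms(self) -> float:
        return self.network_rtt_ms + self.inference_ms + self.queueing_ms


def decide_latency(budget: LatencyBudget, link_up: bool) -> Optional[float]:
    """Return end-to-end latency in ms, or None if no decision can be made."""
    if budget.network_rtt_ms > 0 and not link_up:
        return None  # a path that needs the network fails outright when it is down
    return budget.total_ms()


cloud = LatencyBudget(network_rtt_ms=80.0, inference_ms=5.0, queueing_ms=15.0)
edge = LatencyBudget(network_rtt_ms=0.0, inference_ms=20.0)

print(decide_latency(cloud, link_up=True))   # 100.0
print(decide_latency(edge, link_up=True))    # 20.0
print(decide_latency(cloud, link_up=False))  # None
print(decide_latency(edge, link_up=False))   # 20.0
```

The assumed figures deliberately give the edge device slower inference (20 ms versus 5 ms) to reflect weaker hardware; it still comes out ahead because it skips the network and the queue, and it keeps answering when the link drops.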

The Privacy and Security Liability: In an era of strict data regulations, transmitting sensitive information from medical instruments or consumer devices to the cloud widens the attack surface, because the data is exposed both in transit and on remote servers. A single breach can lead to severe financial loss and lasting brand damage.

The Mechanics of Intelligence

To understand how Edge AI revolutionizes industry, we must first look at how a machine "learns" to replicate human functions—whether it’s navigating an autonomous vehicle or optimizing logistics in a warehouse. This process generally follows two paths: Supervised Learning, where the model is trained on labeled data (like thousands of images of "parcels"), and Unsupervised Learning, where the model identifies hidden patterns without prior labeling.
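The difference between the two paths shows up even in a toy example. Below, a nearest-centroid classifier (a simple supervised method) learns from labeled points, while k-means (a simple unsupervised method) groups the same points with no labels at all. The 2-D points and the "small"/"large" labels are invented stand-ins for real features such as parcel dimensions, and both algorithms were picked for brevity, not because any particular production system uses them.

```python
# Toy contrast between supervised and unsupervised learning (pure Python 3.8+).
# The 2-D points and their labels are invented stand-ins for real features.
import math


def train_nearest_centroid(points, labels):
    """Supervised: average the labeled examples of each class into one centroid."""
    sums, counts = {}, {}
    for (x, y), label in zip(points, labels):
        sx, sy = sums.get(label, (0.0, 0.0))
        sums[label] = (sx + x, sy + y)
        counts[label] = counts.get(label, 0) + 1
    return {lab: (sx / counts[lab], sy / counts[lab]) for lab, (sx, sy) in sums.items()}


def predict(centroids, point):
    """Assign a new point to the class with the closest centroid."""
    return min(centroids, key=lambda lab: math.dist(point, centroids[lab]))


def k_means(points, k, iterations=10):
    """Unsupervised: split unlabeled points into k clusters (Lloyd's algorithm)."""
    centers = list(points[:k])  # naive initialization: the first k points
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to its cluster's mean (keep it if empty).
        centers = [
            (sum(x for x, _ in c) / len(c), sum(y for _, y in c) / len(c)) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers, clusters


points = [(1, 1), (1.5, 2), (1, 1.5), (8, 8), (9, 8.5), (8.5, 9)]
labels = ["small", "small", "small", "large", "large", "large"]

centroids = train_nearest_centroid(points, labels)
print(predict(centroids, (2, 2)))  # small

centers, clusters = k_means(points, k=2)
print(clusters)  # the same two groups, found without any labels
```

The naive initialization in `k_means` happens to work on this data but can produce poor clusters elsewhere; practical code would typically rely on a library implementation with smarter seeding.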

The journey from a concept to a functional AI "brain" follows a specific three-step architecture:

Data Collection: The raw "sensory" input is gathered via endpoint devices, such as industrial sensors, high-definition cameras, or retail scanners.

Model Training: In this phase, programmers and data scientists use powerful computing clusters to run the model through repeated training iterations, adjusting its internal parameters after each pass to reduce its errors. This is where the machine "studies" the data until it can perform the task with high accuracy.

Model Deployment (The Edge Leap): Traditionally, once a model is trained, it is hosted on a remote cloud server. Every time an endpoint device needs a "decision," it must send its data over the network to that server and wait for a response.
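The three steps can be sketched end to end in plain Python. This is a minimal illustration, not a production recipe, and every name in it is hypothetical: the "sensor" data is simulated temperature readings with an assumed fault threshold of 70 degrees, training is batch gradient descent on a one-feature logistic-regression model, and "deployment" simply means serializing two learned parameters to JSON and loading them into a function that needs no server.

```python
# Sketch of the collect -> train -> deploy pipeline with a one-feature
# logistic-regression model. The simulated readings, the 70-degree fault
# threshold, and the 0.95 accuracy target are hypothetical.
import json
import math
import random


def collect_data(n=200, seed=0):
    """Step 1: simulate temperature readings, labeled 1 (fault) above 70 degrees."""
    rng = random.Random(seed)
    readings = [rng.uniform(40.0, 100.0) for _ in range(n)]
    return [(t, 1 if t > 70.0 else 0) for t in readings]


def scale(temp):
    """Map the 40-100 degree range onto [-1, 1] to keep training well-behaved."""
    return (temp - 70.0) / 30.0


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def accuracy(w, b, data):
    """Fraction of readings the model classifies correctly."""
    correct = sum((sigmoid(w * scale(t) + b) >= 0.5) == bool(y) for t, y in data)
    return correct / len(data)


def train(data, lr=0.5, target=0.95, max_epochs=1000):
    """Step 2: repeated gradient-descent passes until the accuracy target is met."""
    w, b = 0.0, 0.0
    for epoch in range(1, max_epochs + 1):
        grad_w = grad_b = 0.0
        for t, y in data:
            error = sigmoid(w * scale(t) + b) - y  # gradient of log-loss w.r.t. the logit
            grad_w += error * scale(t)
            grad_b += error
        w -= lr * grad_w / len(data)
        b -= lr * grad_b / len(data)
        if accuracy(w, b, data) >= target:
            return w, b, epoch
    return w, b, max_epochs


def export_model(w, b):
    """Step 3a: serialize the learned parameters so they can ship to a device."""
    return json.dumps({"w": w, "b": b})


def make_edge_predictor(blob):
    """Step 3b: on the device, load the parameters and decide locally."""
    params = json.loads(blob)

    def predict(temp):
        return sigmoid(params["w"] * scale(temp) + params["b"]) >= 0.5

    return predict


data = collect_data()
w, b, epochs = train(data)
print(f"stopped after {epochs} epochs, training accuracy {accuracy(w, b, data):.2f}")

predict = make_edge_predictor(export_model(w, b))  # no server involved from here on
print(predict(95.0))  # a reading far above 70 degrees, so this should flag a fault
```

For brevity the stopping rule measures accuracy on the training data itself; a real pipeline would judge the model on a held-out validation set before shipping it.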

The Edge Advantage lies in this final step. Instead of sending data to the Cloud, we deploy the model locally onto the device's own silicon. This transition from "Cloud Inference" to "Edge Inference" means:

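Near-Zero Network Latency: Data travels millimeters, not kilometers. Without a network round trip, the only remaining delay is the model's inference time on the device, so decisions can happen in real time.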

Data Sovereignty: Sensitive information stays within the four walls of the facility, so privacy and ethical standards are built into the architecture ("secure by design") rather than added afterward.
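Both benefits can be illustrated with a small simulation of a bedside monitor. It is a sketch under stated assumptions: the device ID, the 40-120 bpm rule standing in for a trained model, and the JSON message format are all hypothetical. The point is structural: each reading is scored where it is produced, only a short alert is queued for the network, and a counter records what a cloud-first design would have uploaded instead.

```python
# Sketch of on-device inference for a bedside monitor. The device ID, the
# 40-120 bpm rule standing in for a trained model, and the JSON message
# format are hypothetical.
import json
from typing import Callable, List


class EdgeNode:
    """Scores readings locally and forwards only alerts, never raw values."""

    def __init__(self, device_id: str, model: Callable[[float], bool]):
        self.device_id = device_id
        self.model = model             # predictor stored on the device itself
        self.outbox: List[str] = []    # the only messages that leave the device
        self.bytes_if_cloud = 0        # what a cloud-first design would upload

    def process(self, reading: float) -> None:
        # A cloud-first design would upload every raw reading for remote inference.
        raw = json.dumps({"device": self.device_id, "value": reading})
        self.bytes_if_cloud += len(raw)
        # Edge design: decide locally; the raw value is never queued for upload.
        if self.model(reading):
            self.outbox.append(json.dumps({"device": self.device_id, "alert": "out_of_range"}))

    def bytes_sent(self) -> int:
        return sum(len(msg) for msg in self.outbox)


def heart_rate_rule(bpm: float) -> bool:
    """Stand-in for a trained model: flag readings outside 40-120 bpm."""
    return bpm < 40 or bpm > 120


node = EdgeNode("monitor-07", heart_rate_rule)
for bpm in [72, 75, 71, 130, 74, 73]:
    node.process(bpm)

print(len(node.outbox))  # 1 (only the 130 bpm reading triggers an alert)
print(node.bytes_sent(), "bytes sent vs", node.bytes_if_cloud, "for cloud-first")
```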

The Future of Autonomy

The transition from cloud-dependent AI to Edge AI represents more than a technical improvement in latency and bandwidth; it is a fundamental enabler of professional autonomy. By moving intelligence to the "edge," whether on a local device or within our own personalized daily protocols, we reduce our reliance on centralized systems and reactive workflows. This decentralized approach allows us to make real-time, high-stakes decisions with precision and privacy, ensuring that our performance is governed by intent rather than infrastructure.

As we look ahead, the most successful organizations will be those that embrace this "Autonomous Edge," empowering their teams to operate with the speed of local processing and the wisdom of global data.